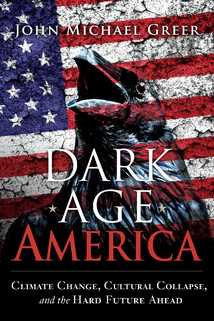

It’s been a few weeks since the conversation on this blog veered away from the decline and fall of American empire to comment on points raised by the recent Age of Limits conference. Glancing back over the detour, I feel it was worth making, but it’s time to circle back to that earlier theme and take it one step further—that is, to shift from how we got here and where we are to where we are headed and why.

That’s a timely topic just at the moment, because events on the other side of the Atlantic have placed some of the crucial factors in high relief. A diversity of forces have come together to turn Europe into the rolling economic debacle it is today, and not all of them are shared by industrial civilization as a whole. Still, a close look at the European crisis will make it possible to make sense of the broader predicament of the industrial world, on the one hand, and the way that predicament will likely play out in the collapse of what remains of the American economy on the other.

Let’s begin with an overview. During the global real estate bubble of the last decade, European banks invested heavily and recklessly in a great many dubious projects, and were hit hard when the bubble burst and those projects moved abruptly toward their real value, which in most cases was somewhere down close to zero. Only one European nation, Iceland, did the intelligent thing, allowed its insolvent banks to fail, paid out to those depositors who were covered by deposit insurance, and drew a line under the problem. Everywhere else, governments caved in to pressure from the rentier class—the people whose income depends on investments rather than salaries, wages, or government handouts—and from foreign governments, and assumed responsibility for all the debts of their failed banks without exception.

Countries that did so, however, found that the interest rates they had to pay to borrow in credit markets quickly soared to ruinous levels, as investors sensibly backed away from countries that were too deeply in debt. Ireland and Greece fell into this trap, and turned to the IMF and the financial agencies of the European Union for help, only to discover the hard way that the "help" consisted of loans at market rates—that is, adding more debt on top of an already crushing debt burden—with conditions attached: specifically, the conditions that were inflicted on a series of Third World countries in the wake of the 1998 financial crash, with catastrophic results.

It used to be called the Washington Consensus, though nobody’s using that term now for the "austerity measures" currently being imposed on the southern half of Europe. Basically, it amounts to the theory that the best therapy for a nation over its head in debt consists of massive cuts to government spending and the enthusiastic privatization, at fire-sale prices, of government assets. In theory, again, debtor countries that embrace this set of prescriptions are supposed to return promptly to prosperity. In practice—and it’s been tried on well over two dozen countries over the last three decades or so, so there’s an ample body of experience—debtor countries that embrace this set of prescriptions are stripped to the bare walls by their creditors and remain in an economic coma until populist politicians seize power, tell the IMF where it can put its economic ideology, and default on their unpayable debts. That’s what Iceland did, as Russia, Argentina, and any number of other countries did earlier, and it’s the only way for an overindebted country to return to prosperity.

That reality, though, is not exactly welcome news to those nations profiting off the modern form of wealth pump, in which unpayable loans usually play a large role. Whenever you see the Washington Consensus being imposed on a country, look for the nations that are advocating it most loudly and it’s a safe bet that they’ll be the countries most actively engaged in stripping assets from the debtor nation. In today’s European context, that would be Germany. It’s one of the mordant ironies of contemporary history that Europe fought two of the world’s most savage wars in the first half of the twentieth century to deny Germany a European empire, then spent the second half of the same century allowing Germany to attain peacefully nearly every one of its war aims short of overseas colonies and a victory parade down the Champs Élysées.

Since the foundation of the Eurozone, in particular, European economic policy has been managed for German benefit even—as happens tolerably often—at the expense of other European nations. The single currency itself is an immense boon to the German economy, which spent decades struggling with exchange rates that made German exports more expensive, and foreign imports more affordable, to Germany’s detriment. The peseta, the lira, the franc and other European currencies can no longer respond to trade imbalances by losing value relative to the deutschmark now that it’s all one currency. The resulting system—combined with the free trade regulations demanded by economic orthodoxy and enforced by bureaucrats in Brussels—has functioned as a highly efficient wealth pump, and has allowed Germany and a few of its northern European neighbors to prosper while southern Europe stumbles deeper into economic collapse.

In one sense, then, it’s no wonder that German governmental officials are insisting at the top of their lungs that other European countries have to bail out failing banks and then use tax revenues to pay off the resulting debt, even if that requires those countries to follow what amounts to a recipe for national economic suicide. The end of the wealth pump might not mean the endgame for Germany’s current prosperity, but it would certainly make matters much more difficult for the German economy, and thus for the future prospects not only of Angela Merkel but of a great many other German politicians. Now even the most blinkered politician ought to recognize that trying to squeeze the last drop of wealth out of southern Europe is simply going to speed up the arrival of the populist politicians mentioned a few paragraphs back, but I suppose it’s possible that this generation of German politicians are too clueless or too harried to think of that.

Still, there may be more going on, because all these disputes are taking place in a wider context.

The speculative bubble that popped so dramatically in 2008 was by most measures the biggest in human history, considerably bigger than the one that blew the global economy to smithereens in 1929. Even so, the events of 1929 and the Great Depression that followed it remain the gold standard of economic crisis, and the managers of the world’s major central banks in 2008 were not unaware of the factors that turned the 1929 crash into what remains, even by today’s standards, the worst economic trauma of modern times. Most of those factors amount to catastrophic mistakes on the part of central bankers, so it’s just as well that today’s central bankers are paying attention.

The key to understanding the Great Depression lies in understanding what exactly it was that went haywire in 1929. In the United States, for example, all the factors that made for ample prosperity in the 1920s were still solidly in place in 1930. Nothing had gone wrong with the farms, factories, mines and oil wells that undergirded the American economy, nor was there any shortage of laborers ready to work or consumers eager to consume. What happened was that the money economy—the system of abstract tokens that industrial societies use to allocate goods and services among people—had frozen up. Since most economic activity in an industrial society depends on the flow of money, and there are no systems in place to take over from the money economy if that grinds to a halt, a problem with the distribution and movement of money made it impossible for the real economy of goods and services to function.

That was what the world’s central bankers were trying to prevent in 2008 and the years immediately afterward, and it’s what they’re still trying to prevent today. That’s what the trillions of dollars that were loaned into existence by the Fed, the Bank of England, and other central banks, and used to prop up failing financial institutions, were meant to do, and it may well be part of what’s behind the frantic attempts already mentioned to stave off defaults and prevent banks from paying the normal price for the resoundingly stupid investments of the former boom—though of course the unwillingness of bankers to pay that price with their own careers, and the convenience of large banks as instruments of wealth pumping, also have large roles there.

In 1929 and 1930, panic selling in the US stock market erased millions of dollars in notional wealth—yes, that was a lot of money then—and made raising capital next to impossible for businesses and individuals alike. In 1931, the crisis spread into the banking industry, kicking off a chain reaction of bank failures that pushed the global money system into cardiac arrest and forced the economies of the industrial world to their knees. The world’s central bankers set out to prevent a repeat of that experience. It’s considered impolite in many circles to mention this, but by and large they succeeded; the global economy is still in a world of hurt, no question, but the complete paralysis of the monetary system that made the Great Depression what it was has so far been avoided.

There’s a downside to that, however, which is that keeping the monetary system from freezing up has done nothing to deal with the underlying problem driving the current crisis, the buildup of an immense dead weight of loans and other financial instruments that are supposed to be worth something, and aren’t. Balance sheets throughout the global economy are loaded to the bursting point with securitized loans that will never be paid back, asset-backed securities backed by worthless assets, derivatives of derivatives of derivatives wished into being by what amounts to make-believe, and equally valuable financial exotica acquired during the late bubble by people who were too giddy with paper profits to notice their actual value, which in most cases is essentially zero.

What makes this burden so lethal is that financial institutions of all kinds are allowed to treat this worthless paper as though it still has some approximation of its former theoretical value, even though everyone in the financial industry knows how much it’s really worth. Forcing firms to value it at market rates would cause a catastrophic string of bankruptcies; even forcing firms to admit to how much of it they have, and of what kinds, could easily trigger bank runs and financial panic; while it remains hidden, though, nobody knows when enough of it will blow up and cause another financial firm to implode—and so the trust that’s essential to the functioning of a money economy goes away in a hurry. Nobody wants to loan money to a firm whose other assets might suddenly turn into twinkle dust; for that matter, nobody wants to let their own cash reserves decline, in case their other assets turn into the same illiquid substance; and so the flow of money through the economy slows to a creep, and fails to do the job it’s supposed to do of facilitating the production and exchange of goods and services.

That’s the risk you take when you try to stop a financial panic without tackling the underlying burden of worthless assets generated by the preceding bubble. Much more often than not, in the past, it’s been a risk worth running; if you can only hold on until the impact of the panic fades, economic growth in some nonfinancial sector of the economy picks up, the financial industry can replace its bogus assets with something different and presumably better, and away you go. That’s what happened in the wake of the tech-stock panic of 2000 and 2001: the Fed dropped interest rates, made plenty of money available to financial firms in trouble, and did everything else it could think of to postpone bankruptcies until the housing bubble started boosting the economy again. It doesn’t always work—Japan has been stuck in permanent recession in the wake of its gargantuan 1990 stock market crash, precisely because it didn’t work—but in American economic history, at least, it’s tended to work more often than not.

Still, there was a major warning sign in the wake of the tech-stock fiasco that should have been heeded, and was not: what boosted the economy anew wasn’t an economic expansion in some nonfinancial sector, but a second and even larger speculative bubble in the financial sphere. I discussed in posts late last year (here and here) ecological economist Herman Daly’s comments about shortages of "bankable projects"—that is, projects that are likely to bring in enough of a return on investment to pay back loans with interest and still make a profit for somebody. In an expanding economy, bankable projects are easy to find, since it’s precisely the expansion of an expanding economy that makes a positive return on investment the normal way of things. If you flood your economy with cheap credit to counter the aftermath of a speculative bubble, and the only thing that comes out of it is an even bigger speculative bubble, something significant has happened to the mechanisms of economic growth.

More specifically, something other than a paralysis of the money system has happened to the mechanisms of economic growth. That’s the unlearned lesson of the last decade. In the wake of the 2001 tech stock crash, and then again in the aftermath of 2008’s even larger financial panic, the Fed flooded the American economy with quantities of cheap credit so immense that any viable project for producing goods and services ought to have been able to find ample capital to get off the ground. Instead of an entrepreneurial boom, though, both periods saw money pile up in the financial industry, because there was a spectacular shortage of viable projects outside it. Outside of manipulating money and gaming the system, there simply isn’t much that makes a profit any more.

Next week we’ll discuss why that is happening. In the meantime, though, it’s important to note what the twilight of investment means for the kick-the-can strategy that’s guiding the Fed and other central banks in their efforts to fix the world economy. That strategy depends on the belief that sooner or later, even the most battered economy will finally begin to improve, and the upswing will make it possible to replace the temporary patches on the economy with something more solid. It’s been a viable strategy fairly often in the past, but it worked poorly in 2001 and it doesn’t appear to be working at all at this point. Thus it’s probable that the Fed is treading water, waiting for a rescue that isn’t on its way; what will happen as that latter point becomes clear is something we’ll be exploring at length later on in this series of posts.

****************

End of the World of the Week #27

Those of my readers who, as I was, were young and impressionable in the early 1970s may well remember The Jupiter Effect, a once-famous book by John Gribbin and Stephen Plagemann which saw print in 1974. Gribbin and Plagemann were astrophysicists, which gave their claims a good deal of apparent credibility. They had noticed that on March 10, 1982, most of the planets in the solar system would be aligned with one another, and Jupiter—the most massive of the planets—would be part of the alignment as it passed through its closest approach to Earth. This additional gravitational tug, they proposed, would set off sunspots, solar flares, and earthquakes, making life interesting for our species and just possibly ending life on Earth.

The Jupiter Effect quickly developed a following, and joined a flurry of other apparently scientific predictions of imminent doom in circulation at that time. I’m not sure how many people were still waiting for catastrophe when 1982 came around, but any who were must have been sorely disappointed; the sunspot count for March 10 was nothing out of the ordinary, the solar flares and earthquakes failed to put in an appearance, and the only known result of the Jupiter Effect was that high tides that day were all of 0.04 millimeters higher than they would otherwise have been.

117 comments:

6/20/12, 10:39 PM

Duncan Kinder said...

6/20/12, 11:00 PM

John Michael Greer said...

Duncan, that's merely because governments spend so much money on price supports for drugs -- that's what police, prisons, etc. are in economic terms. Lacking those government subsidies, the illegal drug industry would have trouble turning a profit, too.

6/20/12, 11:07 PM

Kieran O'Neill said...

The premise is that if people are still going to invest in pension funds, they'd be best off finding ways to invest in local businesses and cooperatives, rather than having their money vanish into the black hole of the financial industry. Apart from the gains in accountability, this has the advantage that if the system falls apart, at worst you've contributed to keeping things running in your town, and at best if the business survives, they may remember your shares and revalue them into whatever new currency emerges.

But yes, I think the days of investment in the sense of Wall Street are numbered. If nothing else, just by observing that pretty much all the companies in the financial industry, as well as most of the big manufacturers, are almost entirely owned by each other in a giant tangled web seems to indicate that that world is rapidly ceasing to have any connection to the economy the rest of us exist in.

6/20/12, 11:17 PM

goedeck said...

Natural Gas: Where Endless Money Went to Die

6/20/12, 11:39 PM

Thijs Goverde said...

We've been riding that tiger ever since; I think the Dutch politicians were the only ones yelling louder (blush, now!) than the Germans in the slo-mo car crash that is the contemporary EU economy.

Regarding the belief that things are bound to 'return to normal' in the end, on a more personal level: My in-laws, i.e. the well-off part of my family, are clinging to their stock for dear life, because 'it would be madness to sell now that shares are so low'. In the end, I expect they'll be okay: some companies are going to come out of this smelling of roses while the rest goes down in flames. As my in-laws have spread their investments broadly, the former category may just make up for losses in the latter.

My own parents are out of debt and looking to buy some land with the cash they can spare.

At the moment, nobody's selling...

except maybe for the government, that's thinking of cutting its budget by selling some bits of nature reserve.

So it goes.

At least my parents will use it to try something in the organic/permaculture range of gardening.

I shudder when I think of the larger-scale looting that we'll see ere the dust settles.

6/21/12, 12:18 AM

Mean Mr Mustard said...

“...allowing Germany to attain peacefully nearly every one of its war aims short of overseas colonies and a victory parade down the Champs Élysées.”

Surely reserved sun loungers (a German tourist cliché) and Liberation day, 1994, count?

More seriously, it’s interesting to note that the whole European project was originally presented as an alternative to recurring wars and destitution. Too bad the partners turned out to still be too self-interested and incompatible after all.

Mustard

6/21/12, 1:27 AM

CGP said...

Regarding the substance of the problems it seems that greed and hubris, coupled with the desire to exploit and profiteer, are at the heart of so many of today’s ills. Perhaps this has always been the case, more or less, but it certainly is the case today. JMG, do you see this tendency to exploit and profiteer as being an inevitable and unshakeable part of human nature (at least collectively) or do you see this as a product of a system (with that system being “free” market economics in today’s world)?

6/21/12, 2:34 AM

Justin Hunneyball said...

Have you read Sacred Economics by Charles Eisenstein? I found it a very inspiring book. He has a compelling vision of how to transform our monetary system into one that is aligned with natural flows and processes, and with sustaining and encouraging life, rather than running counter to living processes, which is currently the case. It is available to read on-line for free (or a donation) at sacred-economics.com. I would be interested in your opinion.

Justin

6/21/12, 2:43 AM

Jim Brewster said...

"The process would be complicated and unpopular, especially among those with the most to lose, but it might help get us past the wall. It would reduce economic activity significantly—that’s going to happen anyway, even in the best instance—but it would also remove the overhang of debt that threatens to bring down the entire economy."

Sounds better than chaotic default, doesn't it? I think when things get desperate enough, ideas like this will get more serious consideration. After all, desperation is what allowed FDR to muscle through the New Deal, and even if it didn't save the economy, it might have staved off a revolution.

6/21/12, 3:31 AM

Jennifer D Riley said...

To my thinking, there is no place in the finance sector for any instrument to be "squared." The concept of the exponent belongs in science and math. It's simply a short hand device to save you writing numerals off the right hand side of the page. Thus, there is no reason for the finance sector to have a CDO squared or a credit default swap or anything else "squared."

6/21/12, 3:55 AM

GuRan said...

David Lawson has a nice pithy critique: Eight Elementary Errors of Economics over at Steve Keen's debtwatch.

6/21/12, 4:24 AM

Castus said...

I can't for the life of me understand when people look at national debts and judge that they will eventually be paid back. Is this some kind of bizarro world where nations whose compound interest on debt is more than their taxed income are still seen as likely to pay it back to whomever they owe? It's farcical, frankly, and what it means is that the world is full of this "free money", free because it will never be paid back.

Do you think this will even matter for future generations, or do you eventually see that system as collapsing completely and being replaced with something more common sense?

Regards,

Castus

6/21/12, 4:59 AM

Jason Heppenstall said...

Signs that all is not well are everywhere – and I’ll be writing plenty more about this on my blog on Sunday. One hillside covered in newly-built apartments advertised them for sale at 39,000 euros due to a bank liquidation. A couple of years ago they would probably have been 100,000 or more. And the massive motorways that carve their way through the rugged hills of the south, built at immense cost over the last decade, remain unfinished in parts and are more or less empty of traffic. These huge prestige projects now look like a national embarrassment.

Spain is taking a temporary respite from the troubles by watching the Euro 2012 football (soccer) tournament – which they may well win. One of the commercials they air during the half-time breaks shows Spaniards feeding newspapers packed with dire economic headlines into a shredder – which then turns them into confetti that is blasted over a jubilant crowd. It says something like ‘live for the moment’. The advert is for Coca Cola.

I have just returned to Denmark and all the talk is of this summer’s bikini fashions and holiday destinations. Crisis – what crisis? It pays to be a cosy neighbor of Germany!

6/21/12, 5:14 AM

xhmko said...

As for Germany, it's interesting to consider how Germany manages to sustain such genuinely awesome advances in green socio-economic policies. I wonder if it's anything to do with the weapons sales and puppeteering of "southern" Europe's infrastructure. Hmmm.

6/21/12, 5:24 AM

Alex Boland said...

I just read Jorgen Randers' new book. It was notably less pessimistic than your own prognostications (though not exactly a feel-good story either to say the least). Have you read it? If so, what were your thoughts on it?

6/21/12, 5:27 AM

Mister Roboto said...

6/21/12, 5:47 AM

RPC said...

6/21/12, 5:56 AM

xhmko said...

6/21/12, 6:05 AM

Unknown said...

Thanks for your insightful analysis and hard work. I look forward to next week's post.

Ruddite

6/21/12, 6:12 AM

jean-vivien said...

http://www.reuters.com/article/2012/06/21/us-europe-trains-idUSBRE85K0F620120621

"New EU states struggle to spend Brussels billions"

6/21/12, 6:58 AM

Twilight said...

In fact there was a huge boom in a nonfinancial sector, namely the housing market. We manufactured an enormous number of homes that litter the countryside, employed a lot of labor, etc. The housing bubble probably was closer to a traditional economic boom than was the tech stock bubble where there was actually nothing of value. However, the size of the associated tertiary economic bubble dwarfed that secondary economic activity, and new construction was only a part of it. Sales of existing homes didn't require construction.

Also people bought a huge amount of products with their new found wealth. Except for part of the automobile market those products were manufactured elsewhere, so the money to buy and power them went overseas. That had to have created a few "bankable projects" building factories in Asia. In part countries like China have been offsetting energy costs with very low labor costs, but that strategy has probably run its course. You can only drive the labor costs so low, and the energy costs keep rising so it doesn't balance out any more. And we cannot afford to keep buying and powering that stuff.

At the heart of it, I think the limitation on bankable projects stems from unacceptably high energy costs, and from the accumulated unpaid costs of energy over the past decades. It's not enough to make money the old fashioned way of using natural resources and added labor to produce a “value added” output, and to live on those profits. That's because we also have to pay the accumulated debt we ran up after the US peaked in energy production (and the imperial wealth pump isn't helping enough anymore). Few real projects can produce that much profit, it's too high a burden. So we play games with money instead, but that produces nothing of real value.

6/21/12, 7:02 AM

Yupped said...

I’m looking forward to the discussion on lack of profitable projects. It’s certainly not like we are short of practical things to do to prepare for the future. I know a part of the profitability challenge is the cost of resources, in particular of energy. I also wonder how much the assumed value of people’s time is a factor. Now that I’m producing a good amount of my own food and some other household essentials, I’ve realized how “uneconomic” it is for me to do it, although I do it anyway. Even if I price out my own time at minimum wage, I could still buy a lot of spinach and kale for the time it takes me to prepare the soil, sow, weed and harvest. But I do it anyway because I want to and I enjoy it in various ways. And I expect it will become more and more important in future. But imagine, say, a lawyer making hundreds of bucks an hour trying to justify growing their own greens! Stepping out of the mindset of putting a monetary value on every activity is a very liberating thing to do.

6/21/12, 7:09 AM

EchosRevenge said...

6/21/12, 7:24 AM

Thomas Daulton said...

Of course -- spoiler alert -- the mysterious reason you allude to why very little exists, outside of paper financial games, which can turn a concrete profit anymore, is because we're entering an age of contraction. Mr. Hubbert, please call your office!

When I read your title, though, the first thing I thought you would be writing about is the decline of the entire 20th-Century American business model where somebody with a good idea rounds up investors, hires a few helpers with investor money, pays them to implement the idea, and then sits back and watches the royalties roll in without ever doing a hard day of work. Apart from renting out property -- running or participating in a "puffball" business like that seems to be the gateway/initiation ritual into becoming a member of the rentier class. Or at least, adopting their values, even when the actual business falls flat. It's sort of a more respectable-looking version of your classic soap-selling pyramid scheme.

Your discussion of why investors won't invest outside the banking system covers that from the "macro" perspective, but I would love it if you wrote a few words from the "street" perspective, as it were. I know so very many people, not just older relatives but people my own age in their late 30s/early 40s, who remain unshakably convinced that model is still in force. That seems to be the only way these people believe money comes into existence, so they can't even conceive of a business that doesn't work that way. When I suggest something like "What if I actually hand-built and sold aquaponics tanks from my garage for a living," these people shake their heads with mild condescension and pity, and tell me "There's no money in that". Working with your own two hands, instead of managing other people's work, is looked at as a dead end.

From my viewpoint, having worked for private companies, for the government, and having started two businesses (that never turned a profit), I think this old investment model is dead and gone. If I may get on a soapbox, my friends and relatives don't seem to understand a few things. #1 is that economies are not static, they evolve like ecosystems, and numerous highly efficient predators have evolved over the past 10-20 years who eat puffball businesses like that alive. They're mostly financial scams, but as a business owner you will also be inundated with pretty-looking offers to buy custom software, put your logos on schwag, sponsor conferences and get "exposure" in return for your money, etc etc.

The more fundamental problem, which nobody with a pet "good idea" can ever be convinced of, is that there is absolutely no shortage of good ideas in the world. In fact there's a glut, and by the laws of supply and demand, the value of good ideas has plummeted from what they think it is. ("But not _my_ idea!! _I_ have a _good_ idea!!") What there's a shortage of is people willing to do hard work with their hands.

Here's a comic that explains the business model I'm talking about: http://xkcd.com/1060/

I'd love it if you commented on what an actual individual business would look like from the perspective of a worker or individual owner, instead of just the "macro". Thanks!

6/21/12, 7:41 AM

K & C said...

I understand that the financial mechanisms that caused the crash of 1929 were undermining things long before that, but certainly the parallel timelines of the Depression and the Dirty Thirties can't be coincidental.

I'm no economist, but it seems to me that widespread failure of staple crops must have had a not insignificant impact upon commodity markets and thus complicated any recovery.

Makes me wonder how the agricultural strip-mining of our remaining topsoil is going to affect things this time around.

6/21/12, 7:54 AM

Dale NorthwestExpeditions said...

6/21/12, 8:11 AM

Steve From Virginia said...

Basically, the GD was World War One prosecuted by non-violent, economic means: the financiers against the working classes, driving them toward communism with one hand while condemning them for it with the other.

Finance indeed 'froze up' as those with pennies held them tightly denying them to the monopolists who had been offering 'frauds and circuses' for decades, then a terrible war.

The central banks were irrelevant during the 1920s and 30s (as they largely are today). Their leverage was minuscule, even the Bank of England was helpless. People hoarded, keeping funds from the financiers. When governments ended the interdependent specie system and replaced it with paper, the public held the paper. Without Hitler and Japan the American industrial capitalists would have been starved to death.

As it was, the Depression did not end with the second war (an interruption) but rather was undermined by the widespread introduction of television, which paraded consumer product lifestyles in front of every viewer.

TV convinced the average citizen that the big businesses were their friends, not monsters out to pillage and destroy them ... unearned goodwill that in the US exists to this day.

6/21/12, 8:54 AM

Robo said...

Too bad the banking system doesn't have to observe Ohm's Law like the power companies do.

6/21/12, 9:00 AM

russell1200 said...

One point.

Thumbing your nose at the IMF is not a free ride. Both Argentina and Russia, which you note, are major exporters. Thus they have a way to trade for the imports that they need. In the case of Argentina that was still not enough and they have variously grabbed up pension funds, etcetera.

So thumbing your nose at the IMF is a little like declaring personal bankruptcy. It is great if you have an income stream that you can then balance your life style to, but if you are simply going to keep on going down the same road.....well I guess we will see with Argentina.

6/21/12, 9:20 AM

Renaissance Man said...

Likewise, Germany, while enjoying the benefits of being one of the powers in the E.U., has not been sucking the Mediterranean countries as dry as you imply. Indeed, many of the countries that did join the E.U. found their standard of living rose. The problems afflicting Greece, Ireland, Portugal, Spain and Italy stem from their politicians; seeing their standards of living rise, populist politicians acted as if these standards would continue rising faster than they actually were and, in an effort to buy popular support, overspent in anticipation of even more accelerated growth. They were criticized for this quite roundly, in fact. Part of the concomitant problem is that their own governments didn't - and don't - effectively collect the taxes owed. In Italy and Greece, for example, lying on tax forms is almost the second national sport after football. This failure to collect has a major effect on balancing books. None of these countries would be in trouble if their elected politicians hadn't basically ignored that little problem, but that wouldn't get anyone re-elected, would it? One of democracy's weak points.

But this is a minor point to what you are really getting at: the problem that the productive economy, which, as you pointed out in a much earlier essay, depends ultimately on energy, has NOT been growing as it ought; that an increasing percentage of the GDP in the U.S. since the Reagan era has been fuelled by financial sleight-of-hand, to the point where the finance sector, which was, in 1930, about 5% of the total economy, has risen to be over 40% of the total U.S. economy (and far too high in many other countries). Essentially, an economy based on trading bubble-gum cards. (do they even still sell bubble gum with the sports cards?)

I recall back in '98, during the election hoopla, the observation that the economic boom hadn't really affected the majority of ordinary working Americans, who didn't feel particularly wealthier, despite the rising GDP, and the almost-balanced budget, and all the good-news economics of the time.

All part of the mythic story that we've been telling ourselves that you've been exploring all year long.

6/21/12, 9:25 AM

phil harris said...

This piece from UK Guardian covers some interesting people in Athens, where business is definitely contracting. One interesting fellow though is busy trying to connect people with local farms for direct delivery. Each rents part of a field and buys the produce thereof, I think.

http://www.guardian.co.uk/world/2012/jun/19/the-agony-of-athens

All that investment in houses (‘suburbs’ mostly), office-towers and transport across swathes of Europe - one does wonder what it is actually doing for a living.

best

Phil

6/21/12, 9:25 AM

Dethe Elza said...

Also, at least in the part of the economy I see, most projects are at a drastically smaller scale than they were even 5-10 years ago, so even highly profitable projects (as % of investment) would not have the high levels of returns (in total dollars) to make it worth investing (and managing that investment).

Finally, more and more of the economy is moving off the books, which makes it harder to invest (at least publicly) in.

And of course, as others have already pointed out, there are the ongoing culture war / post-oil / heavy weather / environmental destruction collapses going on simultaneously.

A catastrophic collapse here, a devastation there, pretty soon it adds up to real trouble.

6/21/12, 9:28 AM

Dethe Elza said...

6/21/12, 9:32 AM

Michael Petro said...

Mr. Roboto beat me to it, but allow me to restate (forgive us if we enjoy the game of second-guessing your columns):

The volume of oil-yet-to-be-disinterred gave President Roosevelt's New Dealing a credibility for infrastructure investing that is, shall we say, a hard sell these days. This is the real reason that Keynesian stimulus (outside of suicidal military spending) is a non-starter.

All bridges are now "bridges to nowhere."

6/21/12, 9:33 AM

Kieran O'Neill said...

Or, in other words, there are two definitions of "profitability". The first is at the level of a business -- whether or not it can pay its employees a living wage, and give a modest dividend to its investors. The second is whether a business grows ad infinitum, which clearly not every business can do, and which most probably wouldn't want to do. I think it's the latter definition of profitability that we are seeing go away.

For that matter, I suspect that much of the growth we do see in share prices comes either from speculation, or from derivatives. I say derivatives because, as I mentioned above, most big corporations (in all industries, not just financial) supplement their balance sheets with shares in other big corporations. And in the middle of this tangled web, in a nebulous and dark hole, sit derivatives like a giant spider. I have a nasty feeling that a good part of most big corporations' balance sheets trace back to fictitious capital created by the financial corporations.

Anyway, the question raised by all this, and by your post, is "how do we keep an economy functioning without growth?"

6/21/12, 9:34 AM

parus said...

I'm in Finland -- we're supposedly doing "well" (in terms of credit rating anyway -- we still have more national debt than we can ever hope to pay back, but who doesn't these days?), and there's basically nothing but credit crisis in the news.

There's been opposition to the bailouts from the start, about fifty-fifty "why do we have to bail out those lazy Greeks who can't manage their money?" and "why do we have to prop up French and German banks?". It's encouraging that it's not just about the former, I guess, though I can see the poor Greeks being the butt of jokes about poor financial sense for generations to come.

Thanks to local opposition politicians, discussion about the national and international effects of a Finnish (and around the time of the first round of elections, Greek) secession from the Euro has been on the table here, basically from the start, and expert opinions are all over the board, from "others would follow suit and the whole thing would unravel" to "no one would care". No one knows what would, or will, happen. I'd expect this discussion to gain steam, as we seem to be moving towards jointly backed debt and a European Federation, with even Germany coming around.

The assurances from government politicians that it'll all be over soon are getting pretty rare, and the tones when asked, sombre, though the assumption still seems to be that eventually economic growth will kick in again, and bail everyone out once and for all. We're just trying to keep our heads above water until then.

It seems Greece missed their chance to vote for politicians opposed to the austerity measures imposed on them, which is understandable though shortsighted -- being kicked out of the Euro seemed like a likely outcome (though I wonder how likely, in practice), and the Greeks no doubt still remember the days before cheap loans brought by the common currency. It's interesting to ponder what things will look like the next time they get to vote.

6/21/12, 9:53 AM

Odin's Raven said...

http://lewrockwell.com/orig13/perkins1.1.1.html

6/21/12, 11:57 AM

shtove said...

I've been following it since early 2007 and am increasingly convinced that economic contraction is chronic and unavoidable.

I recommend Michael Pettis - prescient analyst of China's predicament.

On a bright note - it's heartening to be able to read Pettis and JMG just by clicking the mouse.

(not sure if first post went to moderation)

6/21/12, 12:11 PM

Mean Mr Mustard said...

German overseas colonisation –

http://www.telegraph.co.uk/news/worldnews/europe/germany/5925614/German-holidaymakers-to-pre-book-sun-loungers.html

Parisienne walkways –

http://www.nytimes.com/1994/07/15/world/as-germans-parade-in-paris-some-cheer-a-few-are-chilled.html?pagewanted=all&src=pm

M. Moutarde

6/21/12, 1:56 PM

John Michael Greer said...

Thijs, there's your psychology of previous investment -- I own the stock, therefore it must be worth more than the market thinks. Plenty of people will ride that one straight down into penury. Glad to hear your parents have their heads on straight!

Guten Abend, Herr Mustard! Since the Germans already got their victory parade in Paris in 1940, I suppose we can treat reserved beach loungers as foreign colonies and grant Germany the complete fulfillment of its 1914 aims. I wonder if they'll be celebrating that in two more years.

CGP, life forms will by and large expand into any niche that offers them an opportunity to survive and thrive, whether or not the moral standing of that niche appeals to us. That rule applies to human beings as much as any other life form, and shows itself in every social and economic system ever created. There are ways to minimize that sort of misbehavior, but they all start from the recognition that a fair number of people will be greedy and exploitive if they think they can get away with it.

Justin, I got a review copy but haven't had time to read it yet.

Jim, it's a great idea, and would take a violent revolution to put into place in the US. Remember that one person's debt is another person's investment; by eliminating 90% of debt you strip the rentier class of 90% of its income.

Jennifer, I'd love to see that figure, too, but getting it will take some doing! As for "squared" et al., you've read John Kenneth Galbraith, I hope? He explains somewhere that "financial innovation" always refers to some variation on the same handful of bad ideas, all of which involve exponential functions of one kind or another.

GuRan, no argument there! Lawson's got a good handle on things; thanks for the link.

Castus, it's going to collapse, in our lifetimes. The only questions are when, how suddenly, and with how much collateral damage.

Jason, that commercial's clever; what the folks from Coca-Cola are forgetting is that those same newspapers will presently be used to light torches for the mob, with pitchforks, who come for them.

Xhmko, bingo! It's always easier to pursue expensive investments in infrastructure if you're pumping wealth out of other countries.

Alex, haven't read it yet.

6/21/12, 2:54 PM

John Michael Greer said...

RPC, makes sense to me! Still, you won't get any mainstream politician in the US thinking in those terms -- not for another decade, at least.

Xhmko, that's a good one.

Ruddite, thank you!

Jean-Vivien, interesting question. My guess is that the bureaucrats in Brussels, being hopelessly detached from the real world, have loaded the grants down with so much paperwork that it's not worth the while of the supposed beneficiaries to bother.

Twilight, as I see it, the housing boom was mostly a speculative boom in the tertiary economy, and building houses was not much more relevant to the bubble than printing stock certificates is to a stock market bubble: it's just a matter of providing the material object upon which the fantasies rest.

Yupped, good. What's causing that distortion is the inflated value of money relative to human labor, which is in turn an effect of the impact of fossil fuel-based energy on the economy. When the total value present in the economy is what can be produced by human and animal muscle, with modest additional inputs from sun, wind and water, a day's hard work is going to be worth a good deal more.

Revenge, fascinating. That's what I would have expected.

Thomas, since I'm not an entrepreneur -- in fact, I'm a lousy businessman -- I'm not at all sure I would have anything useful to say. That might be an interesting subject for a blog post by somebody else who has the necessary experience.

K&C, as far as I can tell, yes, it was pretty much coincidental -- bad financial practices on the one hand, bad farming practices and a wicked twist to the climate on the other.

6/21/12, 3:11 PM

Robert said...

6/21/12, 3:59 PM

Guilherme de Baskerville said...

Most of the countries that end up on the receiving end of IMF "bailouts" made a lot of bad decisions for a long time to end up in that position. Not saying that the process itself doesn't amount to sending hired thugs to break someone's kneecaps if they don't pay what they owe. But the thing is, for a predatory lending situation to develop, there must exist both sides: people with too much money willing to "invest" in dodgy projects and send the collection gang afterwards, but also people willing to borrow far more money than they could possibly repay.

The Greeks enjoyed a decade and a half of living well above their means, dodging taxes and creating a humungous government bureaucracy, and now it's all the fault of the evil Germans...

The political systems of the countries that end up doing these heavy borrowings are, usually, very mismatched affairs that end up using this borrowing ability to bribe their population for some time. It usually blows up in their faces, eventually, and takes the country down with it.

OTOH, I'm deeply suspicious of those aforementioned popular politicians and the people who vote them in. We had a similar situation here in Brazil, but unlike Argentina we actually swallowed the IMF pill, and thanks to some hard work and a lot of luck on the commodity markets, we were actually able to pay off the loans and THEN told the IMF to get lost. The thing is, we had radical leftist politicians here yelling that all Brazilian problems were caused by the evil IMF banksters and if we repudiated the national debt things would magically improve overnight and Brazilian standards of living would be "first world". This is just another manifestation of the theme you so repeatedly expose here, of people believing in anything that exempts them from personal blame for whatever problems exist and wishing that "magical solutions" existed for their problems.

I read a lot of things about Greece that mention a similar attitude cropping up there, especially among the Athens youth, a sense of entitlement that is lashing out against the people (IMF, Eurobank, Germans, etc) who are keeping THEM from their rightful place in the sun. I read of this guy, from a hardcore Marxist party, who had no job at 32 or somesuch, lived with his parents and, having procured an education in some artistic field (I think literature or something), blamed the lack of jobs on everyone but not on his choice of an eminently unmarketable profession outside of government (I remember the only viable work for him would be as a college professor, which in Greece, like in most of Europe, is predominantly a state-sponsored affair).

6/21/12, 4:15 PM

Guilherme de Baskerville said...

Now we have a lot of lawyers, psychologists, literature majors, sociologists, economists and a vast array of other such professions, if we can call them that, and very few practical skills. Here, a lot of them hang on government jobs, or office work, but that's a source that's going to diminish rapidly in the not-too-distant future. I do have high hopes for the generation after mine, people in their teens and early 20's now. While perhaps more fractured in terms of culture and goals, a lot of them seem more willing to, you know, work.

6/21/12, 4:16 PM

Steven said...

What happens in the stock markets of the world and on Wall Street is equivalent to black magic. Considering your skill in white magic, I would enjoy your perspective on that claim.

-Steven

6/21/12, 5:43 PM

Doctor Westchester said...

I think that the concept of wealth pumps is one of great explanatory power. I don’t know whether you originated the delightfully clear version of the concept that you use or not, but it is an excellent summary of ideas and concepts that are usually either half hidden or totally submerged in almost anything we read or hear about finance, economics or politics.

One thing that you might expand on is the use of wealth pumps within our country. For example, many people know how there is apparently a net transfer of federal tax dollars from the “blue” states to the “red” states. Once, I assumed that this was just what it appeared to be on the surface, with the more affluent states subsidizing the poorer ones. However, I realize that this might be more equivalent to what foreign aid to places like Africa is. Despite the foaming at the mouth of the right at this liberal bleeding heart generosity, I now consider our efforts at foreign aid to be strictly more a maintenance cost for us, i.e. a little lubrication to keep the wealth pumps going.

Finally, an observation that goes more with last week’s post. This last weekend I was at a major local environmental festival manning our Transition booth. During a lull I was talking with a friend who was helping to (wo)man the booth also. She used to be involved in the local New Age movement. I have no personal experience with it, but her experience mirrored what was expressed in your last post quite closely. The movement seemed to her to be catering to “spoiled rich people”. She wondered how they could think that if something happened in December 2012 it would be a great awakening of human consciousness, instead of the apocalyptic event others claim. It was fun to explain that a “great awakening of human consciousness” is really very much an apocalyptic event as well. She was delighted to hear of your idea of that day in December becoming “Nothing Happened Day”. She made a very enlightened comment about what might happen if we encountered people with “higher levels” of consciousness. Compared to Europeans, the native people of the new world appeared to be far more ecotechnically advanced on average. While there were many European invaders who tried to go “native”, we all know what the overall response was to what was left of the original inhabitants of this continent.

6/21/12, 6:36 PM

Lauren said...

On a personal note, I was finally able to break through and make an "alt-transport trip" for the 250 mile trip to visit an elderly relative. Minimal car travel followed by public bus transport, Greyhound bus, walking, commuter train. Reminded me of travels abroad and it's something I've needed to do to put travel in its true place: it's time consuming but can be done with patience and pleasure, rather than the need for speed and a denial of how resource-intensive and wasteful much of it usually is.

6/21/12, 6:46 PM

Richard Larson said...

The world economy would not be running on fumes if the credit generated by issuing fiat would not have been made available.

Bankable projects (oil drilling/mining/mega farms/etc.) would have been denied with a limited supply of credit using gold and/or silver as money.

You either want the economy to grow us out of resources, or you want a sustainable economy which must limit credit and limit the build-up of industry/government/finance.

The end of the cycle of credit as money is always resources in decline, no bankable projects as you put it, except now, it is far more than just soil.

6/21/12, 7:41 PM

Kurt Cagle said...

I like coming here. The essays (and often the comments) are invariably thought provoking, something that's all too rare these days.

I have to wonder the degree to which automation plays a part in all of this as well? A couple cases in point - I was recently at a Sears to buy a refrigerator after ours had finally died. The saleswoman there had an iPad with an application giving her detailed access to information about all of the appliances they sold, complete with an integrated card reader for handling the purchase literally on the spot. I estimated that this one salesperson could effectively now manage what had been the work of three or four people - a couple of floor salespeople, a cashier, and at least one delivery management person, since fulfillment necessitated only taking a barcode receipt to a pick up area and waiting for a staff person to bring the machine out - complete with an estimated time.

At the company I am currently consulting for (an "integration contractor" doing work for the ObamaCare project) the IT dept has introduced a printing system where you send your job out to a pool of printers, swipe your badge at the printer closest to your meeting, and get the output there even if you didn't send it there initially. It has some definite upsides - you end up being able to monitor traffic and so better plan where more paper is needed rather than overbuying for every printer - but that reduction means less paper sold, means less overall maintenance costs, cuts down on the need to have one or more people dedicating much of their workday to servicing these printers, and so forth.

Take this phenomenon in the aggregate, and one thing that becomes clear is that the economy shrinks. You need fewer people, albeit more specialized ones, to run things. You reduce costs, but most of that cost reduction gets transferred not to higher paychecks, but to greater dividend payments (transference to the rentier class).

Most young software developers today are writing apps for smart phones and tablets, with a significant proportion of that being productivity apps. A few people, early on, made a killing in that space, but software niches fill quickly, and there's already an observable long tail manifesting.

Additive printers (3d printers) are probably the next big thing, but even there, the effect of such printers is already being felt in the marketplace. The price of production for specific components of commercial goods via additive printers has now dropped low enough to make them cost effective compared to large-scale mass production, which itself has already been heavily automated. Yet once again, you have customized microproduction dismantling the nearly automated factories, you have fewer physical raw goods being moved around, far less need for management, physical laborers or support staff.

Certainly, the geopolitical aspects (and the shenanigans of the financial class) play their role, but I personally believe that it is our society's inability to grapple with even the seemingly "benign" aspects of our technology that is playing a big part in the present "crisis". The major economies of the world today were built around the marshaling of energy resources, industrialization (typically with the military doing a lot of the driving), centralized marketing, advertising and analysis, command and control management styles and the physical transportation and housing infrastructure that these require. Yet those same economies are now being undermined by the successor technologies that are shifting the balance towards fewer cars on the roads, the ability to work remotely (with all that comes with that), the ability to move in and out of networks transparently, and mass customization, where a comparatively small number of people with automated tools and libraries can effectively service a far larger population. Thoughts?

6/21/12, 8:23 PM

xhmko said...

You may not be able to turn a profit selling weed, but it is easier to grow your own than to print money, and if one thing is true in some circles, it’s that weed will serve as currency any day of the week, whether it be to pay builders, trade for food, or get a massage. It won’t buy you out of prison though, so be careful.

@ Kieran O'Neill

"how do we keep an economy functioning without growth?"

The same way that everyone, everywhere has done it for most of human history. By living within your means, and not expecting the universe to just fulfill every whim and fancy that comes a society's way. It’s about accepting limits. Money does not grow on trees, after all: you have to cut them down first.

6/22/12, 3:15 AM

xhmko said...

http://betternature.wordpress.com/2012/06/19/12-year-old-explains/

6/22/12, 4:03 AM

jean-vivien said...

Actually it seems to me that there are plenty of new "bankable projects" to invest in. Except that we have to redefine two notions,

1.- what we invest

2.- what we can expect in return

We need to invest time, effort, skillbuilding... and the returns would be social, and material as well but much less profligate than the material returns obtained from burning cheap energy.

It seems to me that magic could be a nifty tool in harnessing human investment. I noticed that it is easier to work when your imagination is stimulated, for example, or when you are mentally ready to accept (when you are "initiated" to) the road ahead.

One of the perceived achievements of the postmodern mindset is that humans no longer need rituals to structure their inner worlds. Especially after going through all kinds of authoritarian regimes which relied on the power of ritual enforced through sheer brute force.

However there are still plenty of hidden rituals that pervade our consumer lifestyle.

Rediscovering more useful forms of rituals will be a big part in undertaking the new forms of investments we desperately need.

The world is full of bankable projects to support the individuals and their communities. When it comes to supporting a complex industrial economy, now that is another matter.

6/22/12, 4:26 AM

Cherokee Organics said...

Happy winter (oops, summer) solstice!

Our winter solstice has been exciting to say the least.

Tuesday night an earthquake measuring 5.3 on the Richter scale struck east of me but was still clearly felt over dinner. It was the strongest for Victoria in 109 years.

On the same night I saw the coldest temperature in the valley below that I'd seen hereabouts of -2 degrees Celsius (28.4 Fahrenheit)

Then Wednesday night gale force winds with gusts of well over 100km/h (about 60 mph).

Then Thursday (winter solstice) it rained for the entire 24 hours and I recorded 78mm (a bit over 3 inches) in the rain gauge.

Just another normal week for the mountains in south-east Australia! Actually, I seriously hope I don't get too many weeks like this one.

Great post too. There's something tickling the back of my mind about it, especially the mention of economic fundamentals and I think it has to do with the dialogue that we were having last week about manufacturing. I haven't collected my thoughts yet.

I had an awful choice to make tonight too. I was at a second hand book shop and had to choose between purchasing Edward Gibbon's "Decline and Fall of the Roman Empire" (it had only 28 of the original 71 chapters) and Jared Diamond's "Collapse". Which would you have chosen?

Regards

Chris

6/22/12, 5:15 AM

Kieran O'Neill said...

And re-imagining society and retelling the stories of our civilisation is certainly part of our task. The short fiction anthology, as well as the Dark Mountain Project, are some attempts at that.

6/22/12, 9:16 AM

Ceworthe said...

6/22/12, 9:32 AM

DeAnander said...

So now it's *our* mountain tops they are blowing up to get at the coal, *our* rivers and aquifers tainted with fracking chemicals, *our* shrimp fishery shut down in the GoM... not "just" some distant spot like the island of Bougainville [highly recommend the doco "Evergreen Island" btw, about the heroic resistance of the Bougainvilleans to a big copper mine and the poisoning of their primary river]. Now it's *our* pension plans, etc. that they are looting, *our* bridges rusting away and libraries being closed, *our* kids not getting basic health care, it's *us* that they are gradually, inexorably immiserating instead of -- no, as well as -- a bunch of South American indios and paisanos, or Vietnamese rice farmers, etc.

Their bad behaviour was further away back then. Now the wavefront of force and fraud is collapsing inward.

In a way it's almost a good thing. We all benefited by all that force and fraud, all those decades. We reaped the bennies but didn't see the costs. Now we're seeing the costs (of infinite-growth industrial capitalism, of growth industrialism generally). Maybe this might, eventually, make us rethink the whole enchilada?

But I'm still hearing people chattering happily at potlucks and at the postal counter about their recent or imminent airplane vacation to this or that remote spot. Even as the Fraser River passes flood stage and evacuations are in progress... So I guess the penny hasn't dropped yet, the dots are not yet connected.

6/22/12, 10:00 AM

jollyreaper said...

There are four stages of economy:

1. Extraction and production of raw materials (mining, farming, etc.)

2. Manufacturing (with all the associated industries)

3. Services

4. R&D, education, and such is generally lumped here.

Depending on which way the country leans on the political spectrum, big business or big government will have control over those stages.

The stuff in stage 3 can be pretty important since that includes everything from doctors to auto mechanics but you can't run an economy solely on services. And with the finance sector gobbling up everything in all importance, it's just crazy. Facebook getting valued at a bazillionty dollars isn't a sign that it's worth something, it's a sign that we don't know how to value anything.

I think the whole rentier thing is the sticking point. They're living off of interest and producing nothing. In order to keep making their necessary returns, the economy has to keep growing and growing. A steady-state economy is going to see their capital dwindle.

I've got this sense that our entire economic faith is going to go through a period of obliteration and replacement much in the way that our comfortable Christian world view (divine creation 6,000 years ago, Earth the center of the universe, every animal made and named in the Garden) was humiliatingly stripped naked in public and shamed.

Well, maybe not quite a death of religious faith, more like the driving out of embarrassingly wrong and ignorant practices like blood-letting, witch burnings and playing the lottery.

6/22/12, 10:58 AM

Bruce Wilder said...

The key problem was in the distribution of income, and the inability to allow suitable adjustments is what resulted in the seizure in the financial system. Central bankers, like Bernanke, want to prevent the seizure, sure; but they also labor to prevent the adjustment to income distribution, which would undermine the rentier class. So far, the central bankers have prevented the seizure, but the concentration of income, and the related political domination by a class of people dependent on the old economy, just "kicks the can down the road". Increasingly rapid disinvestment funds the appearance of superior returns, crowding out the possibility of public and private investment in the future.

6/22/12, 11:12 AM

Alex said...

Incidentally, even if the government doesn't, it's possible to invest in this sort of thing on a much smaller scale with as little as £50 in the UK (and I assume also in the US), as many new projects issue loanstock or bonds to borrow money from the community.

6/22/12, 3:11 PM

Alex said...

There are already some interesting things going on in Greece with regard to local/regional parallel currency: http://www.pri.org/stories/business/economic-security/small-greek-community-turns-to-local-currency-as-economy-struggles-9442.html

At the same time, I also think there will be more potential in future for a more global protest/political currency which could be traded beyond national borders, but outside of the control of large corporations and banks.

6/22/12, 3:23 PM

Lance Michael Foster said...

6/22/12, 6:12 PM

Doctor Westchester said...

Concerning "well-behaved" elites - I should have added "toward us middle and working class Americans - more often than before or since".

6/22/12, 6:45 PM

ando said...

Sure do appreciate the time you take to illuminate the financial aspect of collapse.

Speaking of collapse: in this blog and the comments it has been mentioned that collapse will be gradual and that we are already in the midst of it. An example I noticed today: they are starting to use the term drought here in St. Louis. No rain in weeks. It used to be that the sprinklers would be going full blast to support the Better Homes and Gardens lawns. As I walked around today, I saw many dormant lawns and no sprinklers. The economy has started to make that prohibitive.

namaste,

ando

6/22/12, 7:02 PM

Thomas Daulton said...

In regard to whether Germans [or Americans] are "to blame" for the Wealth Pump: I'd suggest a more optimistic view... I propose we think of the Wealth Pump itself as a "relic of an outmoded morality" in much the same way we think of slavery, the death penalty, or hereditary autocratic kings, and so on. Had you asked a European peasant 500 years ago why he tolerated the whims of some inbred nut with the power of capital punishment, he would likely have said that the King's authority provided moral strength and brought the favor of God on the land. After a few hundred years of trial, we realize today that those moral claims simply don't hold up. I'd like to think "homo economicus", the so-called Rational Actor interested only in his personal material gain, is also failing its real-world test, and the idea will soon (in a historic sense) pass from the realm of the socially tolerable. The Wealth Pump is nothing but the Rational Actor writ large, as a country. The younger people I know who understand the Wealth Pump tend to want to reject its benefits, out of disgust. (Their actual success rate at giving up the material benefits lags behind their intent, but I think it will catch up.) Someday soon we will look back on it as a "mistake" our forefathers made, which we will have grown beyond.

6/22/12, 8:34 PM

Ceworthe said...

6/22/12, 10:11 PM

Cherokee Organics said...

I'm surprised that no one has mentioned that money is actually a magical symbol. It has no other inherent value of its own.

As a society, we give that symbol value (and hence power) because of an unacknowledged and mutually binding social contract. On a daily basis we exchange things of real value, like our work time for these symbols. We then use these newly acquired symbols to exchange with others for things of real value such as food.

However, the whole system relies on trust. The system operates between individuals, companies, governments, countries etc. It's big.

One of the real risks that governments take on board when they begin printing these symbols (sorry, quantitative easing or magicking the symbols into existence) is that they devalue the symbols already held by the population. The population still may have a large number of symbols and feel generally well off, but as trust in the system is broken down those symbols buy less than previous times.

Even worse is when one country holds a large number of those symbols from another country. The other country holding the large number of symbols will want to offload them like hot cakes whilst there are still suckers willing to take those symbols in exchange for items of real value.

So, we may find ourselves living in a country that predominantly has a finance and services focus and has turned its back on its historical manufacturing tradition. This is OK as long as the world keeps up the pretence of the value of the symbols in use. But if, in the very likely scenario, the world decides that those symbols are worth less than previously, then the person living in that country will not have access to the imported manufactured goodies and energy that they are currently enjoying.

The other real risk is that, unfortunately trust can be broken quite quickly and without notice. I think a number of people think that a manufacturing base can be built quite quickly, but this is not the case without access to large amounts of cheap energy.

If you read the reports coming out of Greece, then you will notice that trust has been severely eroded and companies are not supplying things such as basic medical supplies to hospitals. Those hospitals are in turn not supplying services to patients, all because everyone fears not getting paid. This is what a breakdown in trust looks like and it ain’t pretty.

Regards

Chris

6/23/12, 2:18 AM

dltrammel said...

http://www.cbsnews.com/8301-215_162-57458911/obamas-carrying-out-dick-cheneys-energy-plan/

on the US government's rationale behind its current (and past) energy policy. Interesting read because it gives you an idea of how the government may proceed in the future.

6/23/12, 2:31 AM

Tony Weddle said...

Can you shed some light on how Japan has avoided collapse, if it's been in recession for 22 years? Isn't growth an absolute requirement of an economy with a debt-based money system? I realise that government debt is enormous, so how come it's not in a worse apparent position than Greece and many other developed nations?

6/23/12, 3:38 AM

integral yeshe said...

6/23/12, 4:30 AM

Chris said...

This was in relation to Australia's economy. It has long been a tradition in this country for employers to pay compulsory Superannuation on behalf of each employee. There's no choice, it's mandatory.

It was designed to give people money for their retirement. Eventually the government admitted Superannuation would replace the old-age pension it currently pays. Then another Govt (different side of politics) decided to extend the age when you were allowed to retire - or more aptly put, when the Super Fund released your lifetime of Super payments.

That same Govt also brought in a new policy, whereby if you made a personal contribution to your own Super, they would match it! You could also use those voluntary payments as a tax concession.

Our country's economy has been admired for its ability to weather the GEC, but to put it another way, we have a stack of investment money tied into the stock market for Superannuation. And it's compulsory. It's also not guaranteed, so if a private company which invests your Super goes into receivership, you may not see a dime. It's already happened to an unlucky few thousand!

China also has a lot of ownership in mining and manufacturing in our country. Can you see where this is going? I bet you can.

I refuse to make voluntary Super payments because there's no guarantee I'll see my Super investments mature. I could do more with that money now - pay down our mortgage and build infrastructure to manage our property. I have long predicted I wouldn't see my Super before the GEC, simply because our Socialist side of Govt has a habit of making grand claims on behalf of the population (caring for the elderly, children and workers) yet habitually uses the money generated on what we call, "pork barrelling". In other words, giving government and their mates, access to a lot of money they wouldn't otherwise have.

I have been suspicious of this same Socialist Govt when they decided to bring in a new "Carbon Tax", forcing businesses to pay the Govt money for a so-called "cost to the future". The Superannuation fund was supposed to spare future tax-payers the burden of paying old-age pensions.

It means a lot more money is presently being generated to keep the debt sharks away. Who needs another country to fleece the occupants, when the Govt can do it quite well and call it our prosperity for the future.

The other side of politics makes no apologies either (it's their platform) with very favourable Govt policy directly to Corporations for prosperity. Either way, we are the country with the best economic credentials through the GEC, to "talk" other industrial nations around to handing themselves over to speculative prosperity too.

We all have a stake in helping each generation and its future - as it has been for past generations before the industrial economy. They've now turned "tax" into a belief that we are released of the burden of the elderly, young and sick. Yet every generation who pays this magical tax is always left with the burden regardless. Ask anyone who hasn't met the criteria for a government payment, or to have their funds released early.

We're not old enough to retire, not sick or poor enough to claim a Health Care Card for reduced medical. We are not investors in the markets so we don't get a lot of tax concessions. All these taxes are paid for a perceived benefit, but very few can claim. That's how our Govt can say we are prosperous as a nation, and it's the model the world currently admires in the aftermath of the GEC.

The burden is still ours however, and always will be, to care for members in our communities. We have a lot of catching up to do with reality, when the money supply disappears.

6/23/12, 3:50 PM

Cherokee Organics said...

I wouldn't really consider Japan to be an economic success. At present the debt to GDP ratio is something like 200% - one of the highest ratios in the industrial world.

On the other hand, most of this debt is held by the Japanese so they have taken on their own risk and this gives them a level of stability not matched by the US. It also stops other countries from having leverage over that nation.

Hope that answers your questions re Japan.

Regards

Chris

6/23/12, 4:51 PM

jean-vivien said...

Hello Chris,

this was a very interesting comment. I just wonder, if money as a magickal symbol of trust disappears, what kinds of symbols might reappear ?

What are the dangers of losing that trust in money, and what are the positive outcomes that we, as individuals, should care for & encourage ?

6/23/12, 6:21 PM

Cherokee Organics said...

Thanks. I can think of a couple of things that might replace money:

- You could replace one set of magical symbols with another set of magical symbols. Don't believe me? How about in Greece, where perhaps Euros will soon be converted to Drachmas. Will this fix the underlying structural problems? No. Will it buy some time for that society to get its house in order at a lower level of wealth and complexity? Maybe?

- Barter systems. You could swap peaches for honey. Trade doesn't have to be in monetary units. I wonder about barter because it is hard to determine the value of each item. Certainly haggling is a lost art in Western society. I'm happy to haggle though, if given the chance.

- Mutual obligations. Say for example, you could pay rent on land with a percentage of that land's output, either in arrears or in advance. There is an element of risk for both parties in this arrangement, but it is usually quite good for experienced people.

- Indenture. You could sell your future labour output for lodging and food. This happens now, however we call them interns. It's actually a good system in that the person gets training and experience, plus their family (ie. the indentured person’s family) don't have to feed or shelter them.

The real danger in losing trust in any society's symbols is that the individuals in society become desperate and settle for any leader strong enough to maintain (or convincing enough to promise to maintain) some sort of order.

Regards

Chris

6/24/12, 3:23 AM

Cherokee Organics said...

Compulsory super is not too bad. The worst that can be said about the unintended outcomes of the legislation is that it favours men over women, because it accrues along with employer salary payments. Stay at home mums don't accrue super and on average women are paid less than men (this is a fact and not a situation that I agree with).

You raise some interesting points, but I think historically the government expected us all to work till retirement age and then drop dead shortly thereafter. I don't think that the pension was originally intended to provide income support for people from their late 50's until their early 80's.

As a general observation, we probably all don't pay enough tax to support all of the government expenditures. The only way to address this is either to increase taxes or reduce spending or concessions (or print money as a wild alternative!).

Back to super though.

The co-contribution that you referred to is probably only an option taken up by the wealthy. If a person is broke, they're hardly likely to be able to put extra money into superannuation just so they can have matching government payments. It is bad policy.

Superannuation funds are generally cash flow positive so they are very unlikely to go into receivership. Generally if they are in trouble it is because of fraudulent actions on behalf of the trustees and they are generally prosecuted. These are audited entities, so this sort of shenanigans doesn't really go on for very long. I'm sorry for you if you've been personally caught up in some sort of mess. It is very rare though.