Last week’s post on the need to check our narratives against the evidence of history turned out to be rather more timely than I expected. Over the weekend, following hints and nods from the Fed that the current orgy of quantitative easing may not continue, stock and bond markets around the globe did a swan dive. In response, with the predictability of a well-oiled cuckoo clock, the usual claims that total economic collapse is imminent have begun to spread across the peak oil blogosphere.

It’s only the contemporary fixation on “perfect storms” of various imaginary kinds that leads so many people to forget that imperfect storms can cause quite a bit of damage all by themselves. Yet it’s the imperfect storms, the ones we can actually expect to get in the real world, that ought to feature in predictions of the future—if those predictions are meant to predict the future, that is, rather than serving as inkblots onto which to project emotionally charged fantasies, excuses for not abandoning unsustainable but comfortable lifestyles, or what have you.

As I write these words, the slump seems to have stabilized, but it’s a safe bet that if it resumes, and there’s reason to think that it will, the same claims will get plenty of air time, as they did during the last half dozen market slumps. If that happens, it’s an equally safe bet that a year from now, those who made and circulated those predictions will once again have egg on their faces, and the peak oil movement will have suffered another own goal, inflicted by those who have forgotten that the ability to offer accurate predictions about an otherwise baffling future is one of the few things that gives the peak oil movement any claim on the attention of the rest of the world.

Mind you, worries about the state of the world economy are far from misplaced just now. In the wake of the 2008 crash, financial authorities in the US—first the Department of the Treasury, backed by Congressional appropriations, and then the Federal Reserve, backed by nothing but its own insistence that it had the right to spin the presses as enthusiastically as it wished—flooded markets in the US and overseas with a tsunami of money, in an attempt to forestall the contraction of the money supply that usually follows a market crash and ushers in a recession or worse. The theory behind that exercise was outlined by Ben Bernanke in his famous “helicopter speech” in 2002: keep the money supply from contracting in the wake of a market crash, if necessary by dumping money out of helicopters, and the economy will recover from the effects of the crash and return to robust growth in short order.

That theory was put to the test, and it failed. Five years after the 2008 crash, the global economy has not returned to robust growth. Across America and Europe, in the teeth of quantitative easing, hard times of a kind rarely seen since the Great Depression have become widespread. Official claims that happy days will be here again just as soon as everybody but the rich accepts one more round of belt-tightening (also a feature of the Great Depression, by the way) are increasingly hard to sustain in the face of the flat failure of current policies to bring anything but more poverty. Meanwhile, the form taken by quantitative easing in the present case—massive purchases of worthless securities by central banks—has national governments drowning in debt, central banks burdened with mountains of the kind of financial paper that makes junk bonds look secure, and no one better off except a financial industry that has become increasingly disconnected from political and economic realities.

Thus the boom is coming down. On the 18th of this month, Obama commented in a media interview that Bernanke had been at the Fed’s helm “longer than he wanted,” an unsubtle way of announcing that the chairman would not be appointed to a third term in 2014. Shortly thereafter, the Fed let it be known that the ongoing quantitative easing program would be tapered off toward the end of the year, and the general manager of the Bank for International Settlements (BIS), one of the core institutions of global finance, gave a speech noting that central banks had gone too far in spinning the presses, and risked problems as bad as the ones quantitative easing was supposed to cure.

Markets around the world panicked, and for good reason. Most of the cash from quantitative easing in the US and elsewhere got paid out to large banks, on the theory that it would go to borrowers and drive another round of economic growth. That didn’t happen, because borrowing at interest only makes sense when growth can be expected to exceed the interest rate. Whether it’s 18-year-olds taking out student loans to go to college, business owners issuing corporate paper to finance expansion, or what have you, the assumption is that the return on investment will be high enough to cover the cost of interest and still yield a profit. In the stagnant economy of the last five years, that assumption has not fared well, and where government guarantees didn’t distort the process—as happened with student loans in the US, for example—the result was a dearth of new loans, and thus a dearth of new economic activity.
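The arithmetic behind that point can be put in a few lines. What follows is only a minimal sketch: the function name `net_gain_from_borrowing` and every figure in it are hypothetical, chosen for illustration, and it assumes simple annual compounding on both the investment and the loan.

```python
def net_gain_from_borrowing(principal, expected_return, interest_rate, years=1):
    """Net gain from borrowing `principal`, investing it, and repaying the loan.

    Both the investment and the debt compound annually (a simplifying assumption).
    """
    proceeds = principal * (1 + expected_return) ** years
    repayment = principal * (1 + interest_rate) ** years
    return proceeds - repayment


# Hypothetical figures: the same 5% loan in a growing and in a stagnant economy.
growing = net_gain_from_borrowing(100_000, expected_return=0.08, interest_rate=0.05, years=5)
stagnant = net_gain_from_borrowing(100_000, expected_return=0.01, interest_rate=0.05, years=5)

print(f"growing economy:  {growing:,.0f}")
print(f"stagnant economy: {stagnant:,.0f}")
```

The result is positive only when the expected return runs ahead of the interest rate. Once returns fall below it, every borrowed dollar loses money, which is one way to see why cheap credit alone did not produce new loans.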

Unused money in a bank’s coffers these days is about as secure as it is in the pocket of your average eight-year-old, though, and for most of the last five years, the world’s speculative markets were among the standard places for banks to go and spend it.

That helped drive a series of boomlets in various kinds of speculative paper, and pushed some market indices to all-time highs. The end of the quantitative easing gravy train very likely means the end of that process, and of an assortment of other fiscal gimmicks that have been surfing the waves of cheap money pouring out of the Fed and other central banks in recent years. A prolonged bear market is thus likely.

Could that bear market trigger a run on the investment banks that, under the cozy illusion that they’re still too big to fail, have become too arrogant to survive? Very possibly. The twilight of “Helicopter Ben” and his spin-the-presses policies also marks the end of the line for a coterie of economists and bankers, most of them associated with Goldman Sachs, who came to power after the 2008 crisis insisting that they knew how to fix the broken economy. They didn’t, and they are now in the process of discovering—as the neoconservatives found out before them—that while the American political class has almost limitless patience with corruption and venality, it has no tolerance at all for failure. I expect to see a fair number of prominent figures in the nation’s financial bureaucracies headed back to the same genteel obscurity that swallowed the neocons, and it’s by no means unlikely that Goldman Sachs or some other big financial firm may be allowed to crash and burn as part of the payback.

And beyond that? One way or another, the end of quantitative easing bids fair to trigger a wave of harsh economic readjustments, government defaults, corporate bankruptcies, and misery for all. An immense overhang of unpayable debt is going to have to be liquidated in one way or another, and there’s no way for that to happen without a lot of pain. That may well involve a recession harsh enough that the D-word will probably need to be pulled out of cold storage and used instead. Will the remaining scraps of democratic governance in Europe and America, and the increasingly fragile peace among the world’s military powers, survive several years of that? That’s a good question, to which history offers mostly unencouraging answers.

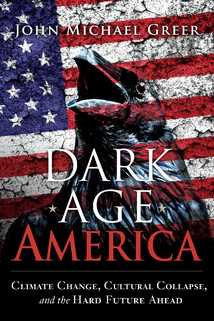

Still, these deeply troubling possibilities aren’t the things you’ll hear aired across the more apocalyptic end of the peak oil scene, if recent declines in global stock markets continue. Rather, if experience is any guide, we can expect a rehash of the claims that the next big economic crisis will cause a total implosion of global financial systems, leading to a credit collapse that will prevent farmers from buying seed for next year’s crops, groceries from stocking their shelves, factories from producing anything at all, and thus land us all plop in the middle of the Dark Ages in short order.

It’s here that the issue discussed in last week’s post becomes particularly relevant, because there’s a difference, and a big one, between the imaginary cataclysms that fill so much space on the doomward end of the blogosphere and what actually happens. Financial history is full of markets that imploded, economies that plunged into recession and depression, currencies that became worthless, and all the other stage properties of current speculations concerning total economic collapse, and it also has quite detailed things to say about what followed each of these crises. Without too much trouble, given access to the internet or a decently stocked library, you can find out what happens when a highly centralized economic system comes apart at the seams, no matter what combination of factors does the deed. The difference between what actually happens and the whole range of current fantasies about instant doom can be summed up in a single phrase: negative feedback.

That’s the process by which a thermostat works: when the house gets cold, the furnace turns on and heats it back up; when the house gets too warm, the furnace shuts down and lets it cool off. Negative feedback is one of the basic properties of whole systems, and the more complex the system, the more subtle, powerful, and multilayered the negative feedback loops tend to be. The opposite process is positive feedback, and it’s extremely rare in the real world, because systems with positive feedback promptly destroy themselves—imagine a thermostat that responded to rising temperatures by heating things up further until the house burns down. Negative feedback, by contrast, is everywhere.
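The contrast between the two kinds of feedback can be shown with a toy calculation. This is a sketch under stated assumptions rather than a model of any real furnace: the thermostat here responds in proportion to the temperature error instead of switching on and off, and the function name `simulate`, the setpoint, and the gain are all hypothetical values picked for illustration.

```python
def simulate(feedback_sign, steps=10, setpoint=20.0, start=21.0, gain=0.5):
    """Track room temperature under a proportional thermostat.

    feedback_sign = -1: negative feedback, the response opposes the error.
    feedback_sign = +1: positive feedback, the response amplifies the error.
    """
    temp = start
    history = [temp]
    for _ in range(steps):
        error = temp - setpoint               # how far the room is from the target
        temp = temp + feedback_sign * gain * error
        history.append(temp)
    return history


negative = simulate(-1)   # the error halves at every step
positive = simulate(+1)   # the error grows by half at every step

print("negative feedback ends at", round(negative[-1], 3))
print("positive feedback ends at", round(positive[-1], 3))
```

Starting one degree above the setpoint, the negative feedback run settles back toward 20 degrees, while the positive feedback run climbs without limit. That runaway is the reason systems of the second kind do not last long enough to be common.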

That’s not something you’ll see referenced in any of the current crop of fast-crash theories, whether those fixate on financial markets, global climate, or what have you. Nearly all those theories make sweeping claims about some set of hypothetical positive feedback loops, while systematically ignoring the existence of well-documented negative feedback loops, and dismissing the evidence of history.

The traditional cry of “But it’s different this time!” serves its usual function as an obstacle to understanding: no matter how many times a claim has failed in the past, and no matter how many times matters have failed to follow the predicted course, believers can always find some reason or other to insist that this time isn’t like all the others.

It happens that I’ve been doing plenty of thinking about negative feedback recently, because I’ve fielded yet another flurry of claims that my theory of catabolic collapse must be false because it doesn’t allow for the large-scale crises that we’re evidently about to experience. Mind you, I have no objection to having my theory critiqued, but it would be helpful if those who did so took the time to learn a little about the theory they think they’re critiquing. In point of fact—I encourage doubters to read a PDF of the original essay—the theory of catabolic collapse not only assumes but requires large-scale crises. What it explains is why those crises aren’t followed by a plunge into oblivion but by stabilization and partial recovery.

The reason is negative feedback. A civilization on the way down normally has much more capital—buildings, infrastructure, knowledge, population, and everything else a macroeconomist would put under this label—than it can afford to maintain. Crisis solves this problem by wrecking a great deal of excess capital, so that it no longer requires maintenance, and resources that had been maintaining it can be put to more immediate needs. In addition, much of the wrecked capital can be stripped for raw materials, cutting expenditures further. Since civilizations in decline are by and large desperately short of uncommitted resources, and are also normally squeezed by rising costs for resource extraction, both these windfalls make it possible for a crumbling society to buy time and stave off collapse for at least a little longer; that’s what drives the stairstep process of crisis, stabilization, partial recovery, and renewed crisis that shows up in the last centuries of every historically documented civilization.
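The stairstep pattern can be reproduced with a very small toy model. To be clear about what this is and is not: it is not the set of equations from the original catabolic collapse essay, only an illustrative sketch. The function name `catabolic_toy` and every parameter (the maintenance rate, the depletion rate, the share of capital wrecked in a crisis, the reinvestment share) are hypothetical numbers chosen to make the mechanism visible.

```python
def catabolic_toy(steps=200, capital=100.0, resources=12.0,
                  maintenance_rate=0.1, depletion=0.99,
                  crisis_loss=0.4, reinvest=0.5):
    """Toy stairstep decline: capital needs upkeep, resources slowly deplete.

    When upkeep exceeds available resources, a crisis wrecks a share of the
    capital, which lowers upkeep and frees resources for a partial recovery.
    """
    history = []   # capital at the end of each step
    crises = []    # steps at which a crisis occurred
    for t in range(steps):
        upkeep = maintenance_rate * capital
        if upkeep > resources:
            # Crisis: excess capital is destroyed and no longer needs upkeep.
            capital *= (1 - crisis_loss)
            crises.append(t)
        else:
            # Stabilization and partial recovery: part of the surplus is reinvested.
            capital += reinvest * (resources - upkeep)
        history.append(capital)
        resources *= depletion   # extraction gets a little harder every step
    return history, crises


history, crises = catabolic_toy()
peaks = [history[t - 1] for t in crises]   # capital just before each crisis

print("crises at steps:", crises)
print("capital before each crisis:", [round(p, 1) for p in peaks])
```

Under these assumptions each crisis is followed by a recovery, but every recovery tops out lower than the one before it, because the resource base it draws on keeps shrinking. The decline proceeds by steps rather than in a single plunge.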

That sequence is so reliable that Arnold Toynbee could argue, with no shortage of evidence, that there are usually three and a half rounds of it in the fall of any civilization—the last half-cycle being the final crisis from which the recovery is somebody else’s business. Our civilization, by the way, has already been through its first cycle, the global crisis of 1914-1954 that saw Europe stripped of its once-vast colonial empires and turned into a battleground between American and Russian successor states.

We’re just about due for the second, which will likely be at least as traumatic as the first; the third, if our civilization follows the usual pattern, should hit a battered and impoverished industrial world sometime in the 22nd century, and the final collapse will follow maybe fifty to a hundred years after that.

Now of course there are plenty of people these days insisting that industrial civilization can’t possibly take that long to fall, just as there are plenty of people who insist that it can’t fall at all. In both cases, the arguments normally rest on the blindness to negative feedback discussed above. Consider the currently popular notion, critiqued in one of last month’s posts, that humanity will go extinct by 2030 due to runaway climate change. The logic here follows the pattern I sketched out earlier—extreme claims about hypothetical positive feedback loops, combined with selective blindness to well-documented negative feedback loops that have put an end to greenhouse events in the past, propped up with the inevitable claim that the modest details that distinguish the present situation from similar events in the past mean that the lessons of the past don’t count.

Current rhetoric aside, greenhouse events driven by extremely rapid CO2 releases are anything but rare in Earth’s history. The usual culprits are large-scale volcanic releases of greenhouse gases, which have boosted CO2 levels in the atmosphere above 1200 ppm (roughly three times current levels) and thus driven what geologists, not normally an excitable bunch, call “super-greenhouse events.” If massive CO2 releases into the atmosphere were going to exterminate life on Earth, these would have done the trick, and super-greenhouse events have happened many times already, just within the small share of the planet’s history that geologists have enough evidence to study.

What stops it? Negative feedback. The most important of the many negative feedback loops that counter greenhouse events is the shutdown of the thermohaline circulation, the engine that drives the world’s ocean currents. The thermohaline circulation also puts oxygen into the deep oceans, and when it shuts down, you get an oceanic anoxic event. Ocean waters below 50 meters or so run out of oxygen and become incapable of supporting life, and the rain of carbon-rich organic materials from the sunlit levels of the ocean, which normally supports a galaxy of deepwater ecosystems, falls instead to the bottom of the sea, taking all its carbon with it. It’s an extremely effective way of sucking excess carbon out of the biosphere: around 70% of all known petroleum reserves, along with thick belts of carbon-rich black shale found over much of the world, were laid down in a handful of oceanic anoxic events in the Jurassic and Cretaceous periods.

Oceanographers aren’t sure yet of the mechanism that shuts off the thermohaline circulation, but it doesn’t require the steamy temperatures of the Mesozoic to do it. At least one massive oceanic anoxic event happened in the Ordovician period, in the middle of a glaciation, and there’s tolerably good evidence that a brief shutdown was responsible for the thousand-year-long Younger Dryas cold period at the end of the last ice age. Not that long ago, global warming researchers were warning about the possibility of a shutdown of the thermohaline circulation in the near future, and measurements of deepwater formation have not been encouraging to believers in business as usual.

Meanwhile, other patterns of negative feedback are already under way. Across much of the tropical world, increased CO2 levels in the atmosphere are helping to drive bush encroachment: the rapid spread of thorny shrubs and trees across former grasslands. Western media coverage so far has fixated on the plight of cheetahs (is there any environmental issue we can’t reduce to sentimentality about cute animals?), but the other side of the picture is that shrubs and trees soak up much more carbon than grasslands, and in many areas, the shrubs involved in bush encroachment make cattle raising impossible, cutting into another source of greenhouse gases. Meanwhile, the depletion of fossil fuels imposes its own form of negative feedback; as petroleum geologists have been pointing out for quite a while now, there aren’t enough economically recoverable fossil fuels in the world to justify even the IPCC’s relatively unapocalyptic predictions of climate change.

Apply the same logic to the economic convulsions I mentioned earlier and the same results follow. The reason a financial collapse won’t result in bare grocery shelves, deserted factories, fallow fields, and mass death is, again, negative feedback. The world’s political, economic, and military officials have plenty of options for preventing such an outcome, most of them thoroughly tested in previous economic breakdowns, and so these officials aren’t exactly likely to respond to crisis by wringing their hands and saying, “Oh, whatever shall we do?”

For that matter, ordinary people caught in previous periods of extreme economic crisis have proven perfectly able to jerry-rig whatever arrangements might be necessary to stay fed and provided with other necessities.

Whether the crisis is contained by federal loan guarantees and bank nationalizations that keep farms, factories, and stores supplied with the credit they need, by the repudiation of debts and the issuance of a new currency, by martial law and the government seizure of unused acreage, or by ordinary citizens cobbling together new systems of exchange in a hurry, as happened in Argentina, Russia, and other places where the economy suddenly went to pieces, the crisis will be contained.

The negative feedback here is provided by the simple facts that people are willing to do almost anything to put food on the table, governments are willing to do even more to stay in power, and in hundreds of previous crises, their actions have proven more than sufficient to stop the positive feedback loops of economic crisis in their tracks, and stabilize the situation at some level.

None of this means the crisis will be easy to get through, nor does it mean that the world that emerges once the rubble stops bouncing and the dust settles will be anything like as prosperous, as comfortable, or as familiar as the one we have today. That’s true of all three of the situations I’ve sketched out in this post. While the next round of crisis along the arc of industrial civilization’s decline and fall will likely be over by 2070 or so, living through the interval between then and now will probably have more than a little in common with living through the First World War, the waves of political and social crises that followed it, the Great Depression, and the rise of fascism, followed by the Second World War and its aftermath. This time, though, the United States is unlikely to be sheltered from the worst impacts of crisis, as it was between 1914 and 1954.

In the same way, the negative feedback loops that counter greenhouse events in the Earth’s biosphere don’t prevent drastic climate swings, with all the agricultural problems and extreme weather events that those imply; they simply prevent those swings from continuing indefinitely, and impose reverse swings that could be just as damaging. If the thermohaline circulation shuts down, in particular, there’s a very real possibility that the world could be whipsawed by extreme weather in both directions (too hot for a few more decades, and then too cold for the next millennium), as happened around the beginning of the Younger Dryas period 12,800 years ago. Our species survived then, and on several other similar occasions, and the Earth as a whole has been through even more drastic climate shifts many times; still, it’s a sufficiently harsh prospect for those of us who may have to live through it that anything that can be done to prevent it is well worth doing.