Last week’s discussion of facts and values was not as much of a diversion from the main theme of the current sequence of posts here on The Archdruid Report as it may have seemed. Every human society likes to think that its core cultural and intellectual projects, whatever those happen to be, are the be-all and end-all of human existence. As each society rounds out its trajectory through time with the normal process of decline and fall, in turn, its intellectuals face the dismaying experience of watching those projects fail, and betray the hopes so fondly confided to them.

It’s important not to underestimate the shattering force of this experience. The plays of Euripides offer cogent testimony of the despair felt by ancient Greek thinkers as their grand project of reducing the world to rational order dissolved in a chaos of competing ideologies and brutal warfare. Fast forward most of a millennium, and Augustine’s The City of God anatomized the comparable despair of Roman intellectuals at the failure of their dream of a civilized world at peace under the rule of law.

Skip another millennium and a bit, and the collapse of the imagined unity of Christendom into a welter of contending sects and warring nationalities had a similar impact on cultural productions of all kinds as the Middle Ages gave way to the era of the Reformation. No doubt when people a millennium or so from now assess the legacies of the twenty-first century, they’ll have no trouble tracing a similar tone of despair in our arts and literature, driven by the failure of science and technology to live up to the messianic fantasies of perpetual progress that have been loaded onto them since Francis Bacon’s time.

I’ve already discussed, in previous essays here, some of the reasons why such projects so reliably fail. To begin with, of course, the grand designs of intellectuals in a mature society normally presuppose access to the kind and scale of resources that such a society supplies to its more privileged inmates. When the resource needs of an intellectual project can no longer be met, it doesn’t matter how useful it would be if it could be pursued further, much less how closely aligned it might happen to be to somebody’s notion of the meaning and purpose of human existence.

Furthermore, as a society begins its one-way trip down the steep and slippery chute labeled “Decline and Fall,” and its ability to find and distribute resources starts to falter, its priorities necessarily shift. Triage becomes the order of the day, and projects that might ordinarily get funding end up out of luck so that more immediate needs can get as much of the available resource base as possible. A society’s core intellectual projects tend to face this fate a good deal sooner than other, more pragmatic concerns; when the barbarians are at the gates, one might say, funds that might otherwise be used to pay for schools of philosophy tend to get spent hiring soldiers instead.

Modern science, the core intellectual project of the contemporary industrial world, and technological complexification, its core cultural project, are as subject to these same two vulnerabilities as were the corresponding projects of other civilizations. Yes, I’m aware that this is a controversial claim, but I’d argue that it follows necessarily from the nature of both projects. Scientific research, like most things in life, is subject to the law of diminishing returns; what this means in practice is that the more research has been done in any field, the greater an investment is needed on average to make the next round of discoveries. Consider the difference between the absurdly cheap hardware that was used in the late 19th century to detect the electron and the fantastically expensive facility that had to be built to detect the Higgs boson; that’s the sort of shift in the cost-benefit ratio of research that I have in mind.

A civilization with ample resources and a thriving economy can afford to ignore the rising cost of research, and gamble that new discoveries will be valuable enough to cover the costs. A civilization facing resource shortages and economic contraction can’t. If the cost of new discoveries in particle physics continues to rise along the same curve that gave us the Higgs boson’s multibillion-Euro price tag, for example, the next round of experiments, or the one after that, could easily rise to the point that in an era of resource depletion, economic turmoil, and environmental payback, no consortium of nations on the planet will be able to spare the resources for the project. Even if the resources could theoretically be spared, furthermore, there will be many other projects begging for them, and it’s far from certain that another round of research into particle physics would be the best available option.

The project of technological complexification is even more vulnerable to the same effect. Though true believers in progress like to think of new technologies as replacements for older ones, it’s actually more common for new technologies to be layered over existing ones. Consider, as one example out of many, the US transportation grid, in which airlanes, freeways, railroads, local roads, and navigable waterways are all still in use, reflecting most of the history of transport on this continent from colonial times to the present. The more recent the transport mode, by and large, the more expensive it is to maintain and operate, and the exotic new transportation schemes floated in recent years are no exception to that rule.

Now factor in economic contraction and resource shortages. The most complex and expensive parts of the technostructure tend also to be the most prestigious and politically influential, and so the logical strategy of a phased withdrawal from unaffordable complexity—for example, shutting down airports and using the proceeds to make good some of the impact of decades of malign neglect on the nation’s rail network—is rarely if ever a politically viable option. As contraction accelerates, the available resources come to be distributed by way of a political free-for-all in which rational strategies for the future play no significant role. In such a setting, will new technological projects be able to get the kind of ample funding they’ve gotten in the past? Let’s be charitable and simply say that this isn’t likely.

Thus the end of the age of fossil-fueled extravagance means the coming of a period in which science and technology will have a very hard row to hoe, with each existing or proposed project having to compete for a slice of a shrinking pie of resources against many other equally urgent needs. That in itself would be a huge challenge. What makes it much worse is that many scientists, technologists, and their supporters in the lay community are currently behaving in ways that all but guarantee that when the resources are divided up, science and technology will draw the short sticks.

It has to be remembered that science and technology are social enterprises. They don’t happen by themselves in some sort of abstract space insulated from the grubby realities of human collective life. Laboratories, institutes, and university departments are social constructs, funded and supported by the wider society. That funding and support doesn’t happen by accident; it exists because the wider society believes that the labors of scientists and engineers will further its own collective goals and projects.

Historically speaking, it’s only in exceptional circumstances that something like scientific research gets as large a cut of a society’s total budget as it does today.

As recently as a century ago, the sciences received only a tiny fraction of the support they currently get; a modest number of university positions with limited resources provided most of what institutional backing the sciences got, and technological progress was largely a matter of individual inventors pursuing projects on their own nickel in their off hours—consider the Wright brothers, who carried out the research that led to the first successful airplane in between waiting on customers in their bicycle shop, and without benefit of research grants.

The transformation of scientific research and technological progress from the part-time activity of an enthusiastic fringe culture to its present role as a massively funded institutional process took place over the course of the twentieth century. Plenty of things drove that transformation, but among the critical factors were the successful efforts of scientists, engineers, and the patrons and publicists of science and technology to make a case for science and technology as forces for good in society, producing benefits that would someday be extended to all. In the boomtimes that followed the Second World War, it was arguably easier to make that case than it had ever been before, but it took a great deal of work—not merely propaganda, but actual changes in the way that scientists and engineers interacted with the public and met their concerns—to overcome the public wariness toward science and technology that made the mad scientist such a stock figure in the popular media of the time.

These days, the economic largesse that made it possible for the latest products of industry to reach most American households is increasingly a fading memory, and that’s made life a good deal more difficult for those who argue for science and technology as forces for good. Still, there’s another factor, which is the increasing failure of institutional science and technology to make that case in any way that matters.

Here’s a homely example. I have a friend who suffered from severe asthma. She was on four different asthma medications, each accompanied by its own bevy of nasty side effects, which more or less kept the asthma under control without curing it. After many years of this, she happened to learn that another health problem she had was associated with a dietary allergy, cut the offending food out of her diet, and was startled and delighted to find that her asthma cleared up as well.

After a year with no asthma symptoms, she went to her physician, who expressed surprise that she hadn’t had to come in for asthma treatment in the meantime. She explained what had happened. The doctor admitted that the role of that allergy as a cause of severe asthma was well known. When she asked the doctor why she hadn’t been told this, so she could make an informed decision, the only response she got was, and I quote, “We prefer to medicate for that condition.”

Most of the people I know have at least one such story to tell about their interactions with the medical industry, in which the convenience and profit of the industry took precedence over the well-being of the patient; no few have simply stopped going to physicians, since the side effects from the medications they received have been reliably worse than the illness they had when they went in. Since today’s mainstream medical industry makes so much of its scientific basis, the growing public unease with medicine splashes over onto science in general. For that matter, whenever some technology seems to be harming people, it’s a safe bet that somebody in a lab coat with a prestigious title will appear on the media insisting that everything’s all right; some of the time, the person in the lab coat is right, but it’s happened often enough that everything was not all right that the trust once reposed in scientific experts is getting noticeably threadbare these days.


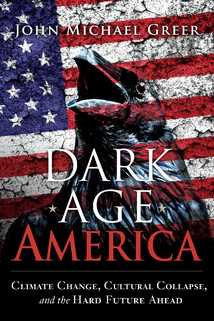

Public trust in scientists has taken a beating for several other reasons as well. I’ve discussed in previous posts here the way that the vagaries of scientific opinion concerning climate change have been erased from our collective memory by one side in the current climate debate. It’s probably necessary for me to reiterate here that I find the arguments for disastrous anthropogenic climate change far stronger than the arguments against it, and have discussed the likely consequences of our civilization’s maltreatment of the atmosphere repeatedly on this blog and in my books; the fact remains that in my teen years, in the 1970s and 1980s, scientific opinion was still sharply divided on the subject of future climates, and a significant number of experts believed that the descent into a new ice age was likely.

I’ve taken the time to find and post here the covers of some of the books I read in those days. The authors were by no means nonentities. Nigel Calder was a highly respected science writer and media personality. E.C. Pielou is still one of the most respected Canadian ecologists, and the book of hers shown here, After the Ice Age, is a brilliant ecological study that deserves close attention from anyone interested in how ecosystems respond to sudden climatic warming. Windsor Chorlton, the author of Ice Ages, occupied a less exalted station in the food chain of science writers, but all the volumes in the Planet Earth series were written in consultation with acknowledged experts and summarized the state of the art in the earth sciences at the time of publication.

Since certain science fiction writers have been among the most vitriolic figures denouncing those who remember the warnings of an imminent ice age, I’ve also posted covers of two of my favorite science fiction novels from those days, which were both set in an ice age future. My younger readers may not remember Robert Silverberg and Poul Anderson; those who do will know that both of them were serious SF writers who paid close attention to the scientific thought of their time, and wrote about futures defined by an ice age at the time when this was still a legitimate scientific extrapolation.

These books exist. I still own copies of most of them, and any of my readers who takes the time to find one will discover, in each nonfiction volume, a thoughtfully developed argument suggesting that the earth would soon descend into a new ice age, and in each of the novels, a lively story set in a future shaped by the new ice age in question. Those arguments turned out to be wrong, no question; they were made by qualified experts, at a time

It’s far from the only example of the same kind. Many of my readers will remember the days when all cholesterol was bad and polyunsaturated fats were good for you. Most of my readers will recall drugs that were introduced to the market with loud assurances of safety and efficacy, and then withdrawn in a hurry when those assurances turned out to be dead wrong. Those readers who are old enough may even remember when continental drift was being denounced as the last word in pseudoscience, a bit of history that a number of science writers these days claim never happened. Support for science depends on trust in scientists, and that’s become increasingly hard to maintain at a time when it’s unpleasantly easy to point to straightforward falsifications of the kind just outlined.

On top of all this, there’s the impact of the atheist movement on public debates concerning science. I hasten to say that I know quite a few atheists, and the great majority of them are decent, compassionate people who have no trouble accepting the fact that their beliefs aren’t shared by everyone around them. Unfortunately, the atheists who have managed to seize the public limelight too rarely merit description in those terms. Most of my readers will be wearily familiar with the sneering bullies who so often claim to speak for atheism these days; I can promise you that as the head of a small religious organization in a minority faith, I get to hear from them far too often for my taste.

Mind you, there’s a certain wry amusement in the way that the resulting disputes are playing out in contemporary culture. Even diehard atheists have begun to notice that whenever Richard Dawkins opens his mouth, a dozen people decide to give religion a second chance. Still, the dubious behavior of the “angry atheist” crowd affects the subject of this post at least as powerfully as it does the field of popular religion. A great many of today’s atheists claim the support of scientific materialism for their beliefs, and no small number of the most prominent figures in the atheist movement hold down day jobs as scientists or science educators. In the popular mind, as a result, these people, their beliefs, and their behavior are quite generally conflated with science as a whole.

The implications of all these factors are best explored by way of a simple thought experiment. Let’s say, dear reader, that you’re an ordinary American citizen. Over the last month, you’ve heard one scientific expert insist that the latest fashionable heart drug is safe and effective, while three of your drinking buddies have told you in detail about the ghastly side effects it gave them. You’ve heard another scientific expert denounce acupuncture as crackpot pseudoscience, while your Uncle Henry, who messed up his back in Iraq, got more relief from three visits to an acupuncturist than he got from six years of conventional treatment. You’ve heard still another scientific expert claim yet again that no qualified scientist ever said back in the 1970s that the world was headed for a new ice age, and you read the same books I did when you were in high school and know that the expert is either misinformed or lying. Finally, you’ve been on the receiving end of yet another diatribe by yet another atheist of the sneering-bully type mentioned earlier, who vilified your personal religious beliefs in terms that would probably count as hate speech in most other contexts, and used an assortment of claims about science to justify his views and excuse his behavior.

Given all this, will you vote for a candidate who says that you have to accept a cut in your standard of living in order to keep research laboratories and university science departments fully funded?

No, I didn’t think so.

In miniature, that’s the crisis faced by science as we move into the endgame of industrial civilization, just as comparable crises challenged Greek philosophy, Roman jurisprudence, and medieval theology in the endgames of their own societies. When a society assigns one of its core intellectual or cultural projects to a community of specialists, those specialists need to think, hard, about the way that their words and actions will come across to those outside that community. That’s important enough when the society is still in a phase of expansion; when it tips over its historic peak and begins the long road down, it becomes an absolute necessity—but it’s a necessity that, very often, the specialists in question never get around to recognizing until it’s far too late.